The architects of UX are facing a daunting new challenge:

How do you integrate AI into the seamless user experience you’ve strived to create?

More specifically, how should users view AI-generated content? How can you make it engaging without being too obtrusive? How can you demonstrate that AI-generated information is credible?

The key to creating a positive user experience around AI is trust. If they don’t trust it, your users will not see your product as valid or reliable.

Building AI software may not fall under UX designers’ job description, but integrating it into the user experience has become a principal UX design challenge.

Why people love and fear AI

Although AI is a major buzzword, most people don’t understand how it works. That is understandable. The media covers AI in somewhat elusive, abstract terms. Even tech publications tend to talk about it without really explaining what it is. (You can find a good explainer in this article from Futurism.)

Because of this, AI represents a type of mystical, inexplicable phenomenon. It captivates audiences because it appeals to their sense of wonder.

But a thin line separates this wonder from fear.

That’s why movies tend to portray AI as such a threatening force. Nightmares of a future where robots dominate human society are a recurring theme (think: I, Robot).

The reason AI can be so fascinating and scary at the same time boils down to a lack of trust.

What factors determine trust in AI?

Trust between humans is based on different criteria than trust between humans and machines. Humans determine whether or not to trust one another based on factors like reliability, sincerity, competence, and intent.

Humans’ trust in machines depends on accuracy, consistency, and fallibility. For many people, trust depends on their ability to interpret how the system works.

AI complicates this.

It can appear to possess human-like reasoning and cognitive faculties, yet it lacks interpretability. It can be configured to output insights that no human could compute, free of human error. At the same time, a flawed algorithm could lead a machine to learn to do the wrong thing. In some situations, the consequences could be catastrophic.

Lack of trust in AI is the biggest UX design challenge

AI has become a central component of many companies’ customer strategies. It enables greater personalization, more access to support, and faster service, all of the tenets of a positive customer experience.

But if you want your users to have a positive experience with your AI technology, they have to be able to trust it. How do you do that? Through better UX. Here are five ways to improve user trust in AI.

1. Clue your users into your AI

You don’t need to delve into the complexities that underlie the AI and machine learning capabilities your product uses. But you can raise user trust if you let them in on the data your algorithms use to produce predictions and recommendations.

For example, Netflix uses AI to curate a list of recommended shows and movies for subscribers based on what they’ve already watched.

It doesn’t label this category as “AI-Generated Recommendations,” but it does call it “Because You Watched Californication,” “Because You Watched Mad Men,” etc. It solves this UX design challenge by using the title to tell users how it came up with its suggestions.
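For teams that want to try this pattern, here is a minimal TypeScript sketch of the idea: group suggestions by the item that triggered them and put that item in the row title. It is an illustration only, not how Netflix builds its rows. The `Recommendation` shape, the `basedOn` field, the `buildExplainedRows` function, and the “Recommended for You” fallback heading are all assumptions made for this example.

```typescript
// Hypothetical shape of one suggestion; field names are illustrative only.
interface Recommendation {
  title: string;
  // The item from the user's history that triggered this suggestion, if known.
  basedOn?: string;
}

interface RecommendationRow {
  heading: string;
  titles: string[];
}

// Group suggestions by the watched title that produced them, and surface that
// title in the row heading so users can see where each suggestion came from.
function buildExplainedRows(recs: Recommendation[]): RecommendationRow[] {
  const rows: RecommendationRow[] = [];
  for (const rec of recs) {
    const heading = rec.basedOn
      ? `Because You Watched ${rec.basedOn}`
      : "Recommended for You"; // assumed fallback when no source is known
    let row: RecommendationRow | undefined = rows.filter(
      (r) => r.heading === heading
    )[0];
    if (!row) {
      row = { heading, titles: [] };
      rows.push(row);
    }
    row.titles.push(rec.title);
  }
  return rows;
}

// Example usage with made-up suggestion titles.
const rows = buildExplainedRows([
  { title: "Show A", basedOn: "Mad Men" },
  { title: "Show B", basedOn: "Californication" },
  { title: "Show C", basedOn: "Mad Men" },
  { title: "Show D" },
]);
```

The design choice worth noting is that the explanation lives in the data passed to the UI, so the interface never has to invent a reason after the fact.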

2. Don’t disguise your AI

As AI technology develops, it will be able to take on more human tasks with higher levels of accuracy and efficiency. But you will completely undermine the trust of your users if you try to make them believe they are communicating with a human when it’s really a computer.

On your website, you can differentiate AI-generated content with icons or labels. It’s important to make this distinction on the phone, too. As natural language processing technology improves, callers will be able to speak normally to automated customer support reps. But deceiving them into believing they are talking to a human will erode their trust and create a frustrating experience.

3. Don’t make it too presumptuous

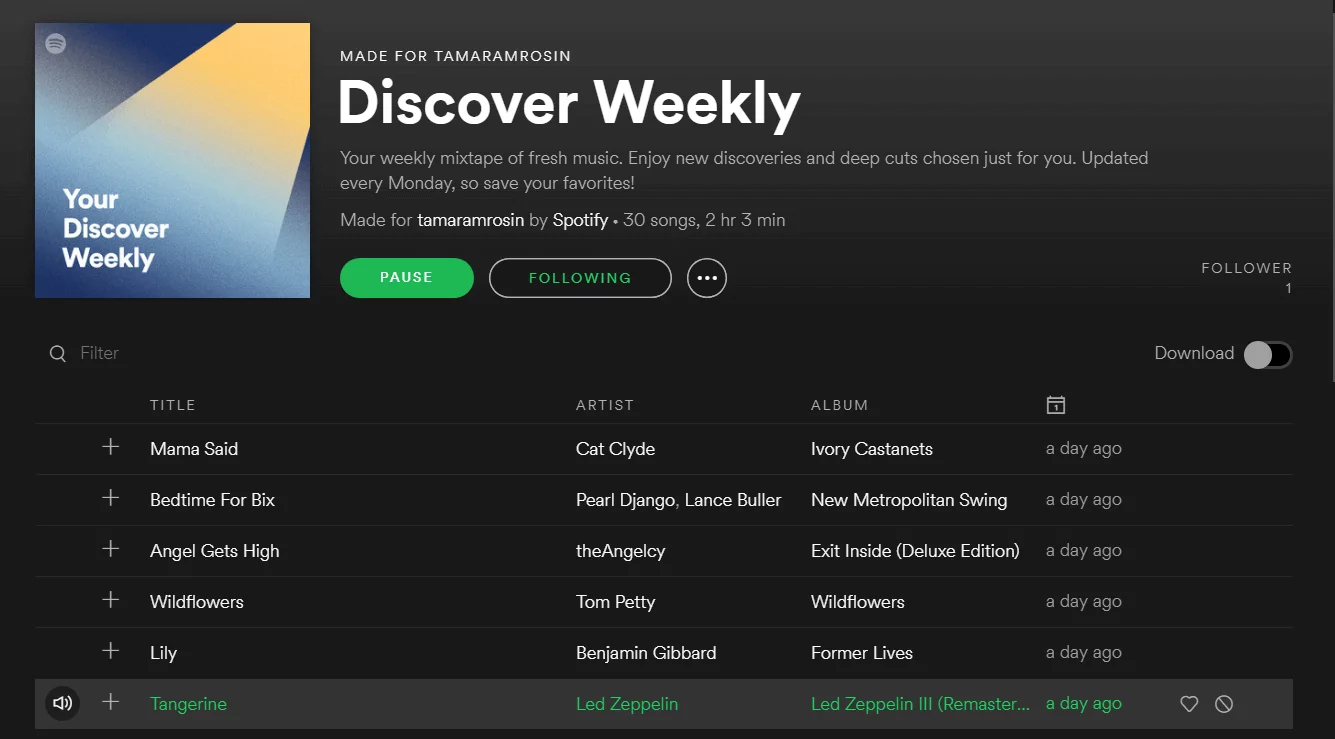

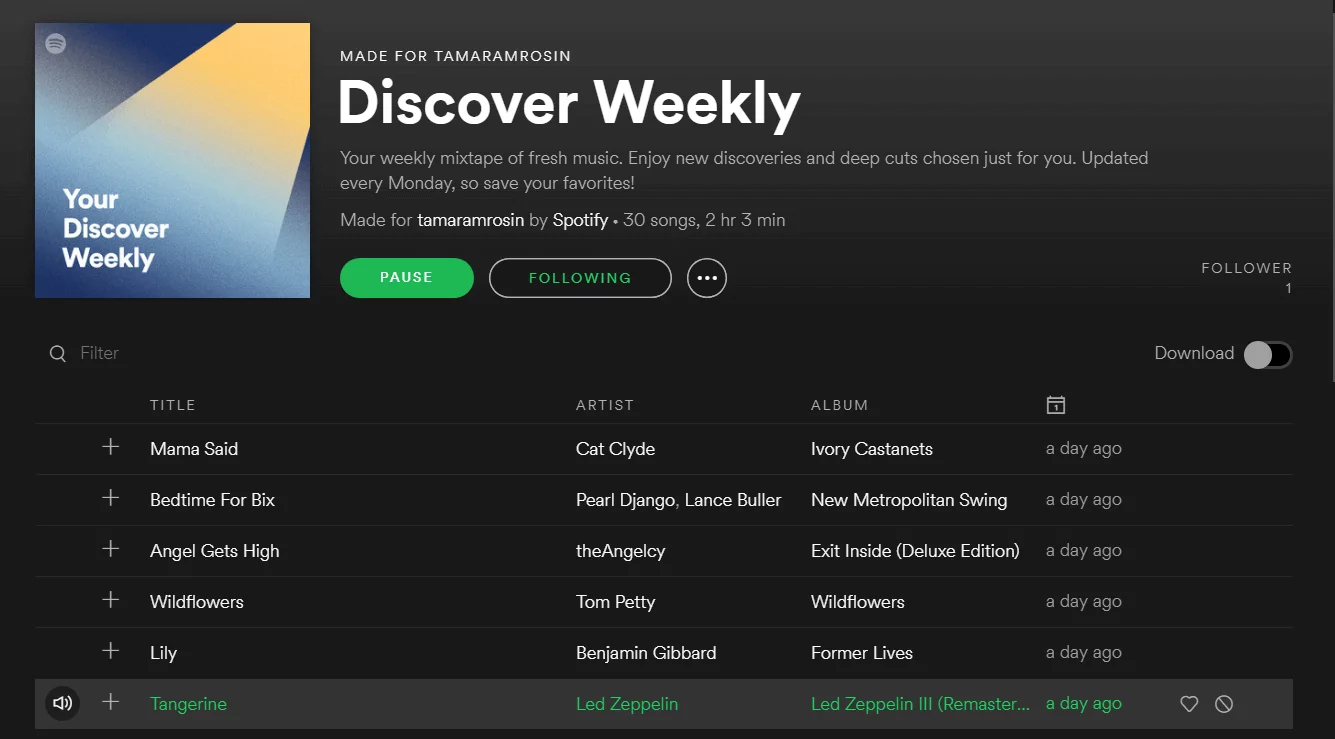

Your AI must respect that in the human-to-machine relationship, the human is the boss. One UX design challenge is drawing attention to and building confidence in AI-generated content without making it too pushy.

Spotify does a great job of this. It uses a subscriber’s listening history to create a playlist of new music they might like. The playlist appears at the top of the “Browse” page, but it’s not obtrusive. And the title works: “Discover Weekly.” It’s not called “Your New Favorite Tunes.” If it were, users would be more interested in challenging it than embracing it.