No longer confined to the pages of science fiction novels, modern iterations of artificial intelligence (AI) have raised the expectations of computer scientists worldwide.

A recent Google report describes AI as the most profound technology humanity is working on today and a critical part of solving big societal challenges, from tackling climate change to developing new personalized medicines.

Since the discipline was formally founded in the 1950s, AI has advanced by leaps and bounds, and it continues to open up new opportunities for value creation across a vast number of industries.

Harnessing the power of advanced machine learning (ML) and deep learning algorithms, AI has become a game-changer in the business ecosystem. It enables businesses to automate processes, streamline operations, and move faster. More importantly, it is central to decision intelligence, empowering enterprises to make data-driven decisions that significantly enhance efficiency and productivity.

According to Next Move Strategy Consulting, the AI market was valued at USD 95.60 billion in 2021 and is predicted to reach USD 1,847.58 billion by 2030, registering a compound annual growth rate (CAGR) of 32.9% between 2022 and 2030.

The development of AI can largely be attributed to the vast amounts of data now available and the powerful processing capabilities that enable its current applications. Take ChatGPT as an example: it has reimagined the way we engage with technology, leading to notable performance improvements and boosting our productivity.

It all begins with an AI model, the building block of any AI system. An AI model is a program or algorithm that enables machines to analyze data, identify patterns, and make predictions. By learning from experience, in the form of data, AI models can adapt to new situations and perform tasks much as a human would.

This article explores AI models, first defining what an AI model is and why it matters to businesses today. We’ll also distinguish between AI and ML before turning to the various types of ML and a look at some common AI models. Finally, we’ll walk you through the AI modeling process and share our thoughts on the future of this developing field.

What is an AI model?

AI models are software systems that execute tasks without step-by-step instructions or constant human involvement.

To do so, AI models draw on algorithms and techniques such as rule-based systems, probabilistic reasoning, and neural networks to analyze large datasets and derive insights from them. These models frequently incorporate machine learning and deep learning techniques to make decisions and predictions based on that analysis.

An MIT Sloan Management Review report found that more than half of respondents say their companies are piloting or deploying AI (57%), have an AI strategy (59%), and understand how AI can generate business value (70%).

The same report found that 87% of organizations worldwide believe AI technologies will give them a competitive advantage.

AI models can also be designed to learn and improve their performance over time. As the number of data points they are trained on increases, they can achieve greater accuracy in data analysis and forecasting. In other words, the more relevant, good-quality data an AI model is exposed to, the better it tends to become.

While the current versions of AI significantly enhance industry capabilities, there’s still a considerable amount of untapped potential in the value AI can provide in the future.

AI continues to be incredibly valuable in a wide range of applications, from healthcare and finance to transportation and entertainment.

What is the value of AI to businesses?

Before we delve into the architectural components that make up an AI model, let’s explore AI’s value to businesses.

Artificial intelligence (AI) has emerged as a game-changer for organizations across multiple industries. The value of AI is realized in its ability to enhance operational efficiency, provide better customer experiences (CX), and deliver greater insights into business operations.

A significant advantage of incorporating AI into business operations is improved CX. AI-powered chatbots can provide accurate and timely responses to customer queries, offering personalized engagement that drives customer loyalty.

AI algorithms can also analyze customer data to identify patterns and preferences, enabling businesses to create more targeted marketing campaigns for their products and services.

A Gartner survey found that one-third of organizations are implementing AI across multiple business units.

Another area where AI provides immense value is operational efficiency. AI-powered automation of routine tasks can free up employees’ time, allowing them to focus on more critical work that requires human input. This can boost productivity, reduce costs, and shorten turnaround times.

AI also provides valuable insights into business operations. By analyzing large datasets, AI algorithms can detect patterns and trends that are not immediately evident, providing businesses with a more comprehensive understanding of their operations. This information can enable companies to optimize processes, reduce costs, and make data-driven decisions.

The Gartner survey reveals how organizations are evolving their use of AI as part of their automation strategies, with 80% of executives believing that automation can be applied to any business decision.

What is the difference between AI and ML?

Although artificial intelligence (AI) and machine learning (ML) are closely intertwined, they are not the same.

AI is a broad field concerned with creating machines that can perform tasks that typically require human intelligence, such as solving problems, recognizing patterns, understanding natural language, and making decisions. An AI model, therefore, is a computational model designed to perform such tasks.

ML, on the other hand, is a subset of AI focused on building algorithms that allow computers to learn from data and make decisions or predictions based on it. An ML model is therefore trained on data and then used to predict outcomes for new data without being explicitly programmed for the task.

According to Fortune Business Insights, the global ML market was valued at $19.20 billion in 2022. It’s projected to grow to $26.03 billion in 2023 and reach $225.91 billion by 2030.

In essence, while ML models are inherently a part of AI, not all AI models employ machine learning. Some are based on pre-programmed rules and can’t learn from data.
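To make the distinction concrete, here is a minimal Python sketch on a hypothetical task: deciding whether a temperature counts as "hot". The rule-based function uses a threshold a person hard-coded, while the ML version learns its threshold from labeled examples by picking the cut-off that misclassifies the fewest of them (a one-feature decision stump). The task, function names, and data are illustrative assumptions, not drawn from any real system.

```python
# Hypothetical toy task: decide whether a temperature in °C counts as "hot".

def rule_based_is_hot(temp_c: float) -> bool:
    """Rule-based AI: a human wrote the decision rule, and it never changes with data."""
    return temp_c > 30.0

def learn_threshold(examples: list[tuple[float, bool]]) -> float:
    """Machine learning: choose the threshold that misclassifies the fewest labeled examples."""
    best_threshold, best_errors = examples[0][0], len(examples) + 1
    for candidate, _ in sorted(examples):
        errors = sum((temp > candidate) != label for temp, label in examples)
        if errors < best_errors:
            best_threshold, best_errors = candidate, errors
    return best_threshold

# Hypothetical labeled training data: (temperature, did people call it hot?)
training_data = [(12.0, False), (18.0, False), (22.0, False),
                 (26.0, True), (29.0, True), (35.0, True)]
learned_threshold = learn_threshold(training_data)

def learned_is_hot(temp_c: float) -> bool:
    """ML model: the same rule shape, but the threshold was learned from the data."""
    return temp_c > learned_threshold

print(learned_threshold)                               # 22.0
print(rule_based_is_hot(25.0), learned_is_hot(25.0))   # False True
```

Retraining on different labeled data would move the learned threshold automatically, whereas changing the rule-based version requires someone to edit the code.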

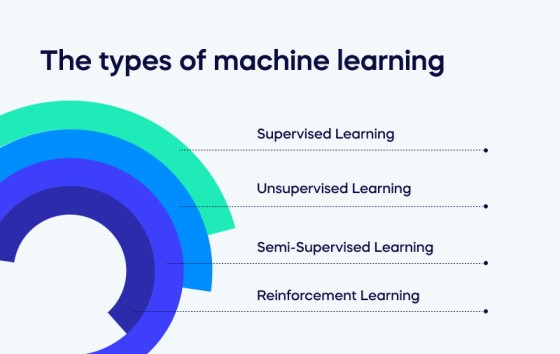

The types of machine learning

Before we delve into the various types of AI models, it’s worth focusing on machine learning (ML) models.

ML is one of AI’s most influential and widely used subsets, given its ability to learn from data and improve over time without being explicitly programmed.

It drives many modern applications like recommendation systems, image recognition, natural language processing, and autonomous vehicles.

Let’s explore the main types of machine learning below:

- Supervised Learning: This is the most common type of machine learning. It involves training a model on a labeled dataset. In other words, both the input and the correct output are provided to the algorithm during the training process. The model aims to learn a mapping from inputs to outputs and make predictions on unseen data. Examples include Linear Regression, Decision Trees, and Support Vector Machines.

- Unsupervised Learning: Unlike supervised learning, unsupervised learning deals with unlabeled data. The goal is to find hidden patterns or intrinsic structures in the input data. Common techniques include clustering (grouping similar instances), anomaly detection (finding unusual instances), and dimensionality reduction (simplifying the input data without losing too much information). Examples include K-means clustering and Principal Component Analysis (PCA).

- Semi-Supervised Learning: This type of machine learning lies between supervised and unsupervised learning. It works with partially labeled data, where typically only a small portion of the examples are labeled and the rest are not. Techniques in this category combine principles of both supervised and unsupervised learning.

- Reinforcement Learning: In this type of machine learning, an agent learns how to behave in an environment by performing actions and receiving rewards or penalties. The goal is to learn an optimal policy, where a policy defines the action the agent should choose in each situation. Examples include various algorithms used in self-driving cars, video games, and robotics.
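The unsupervised learning entry above names K-means clustering as an example. The sketch below is a minimal pure-Python version of the standard K-means procedure (Lloyd's algorithm) for 2-D points: it alternates between assigning each point to its nearest centroid and moving each centroid to the mean of its assigned points, without using any labels. The function name, the initialization from randomly chosen data points, and the sample data are illustrative assumptions.

```python
import math
import random

def kmeans(points: list[tuple[float, float]], k: int,
           max_iter: int = 100, seed: int = 0) -> list[int]:
    """Cluster 2-D points into k groups (Lloyd's algorithm); returns a cluster index per point."""
    rng = random.Random(seed)
    centroids = rng.sample(points, k)  # assumption: initialize from k randomly chosen data points
    labels = [0] * len(points)
    for _ in range(max_iter):
        # Assignment step: attach every point to its nearest centroid (Euclidean distance).
        labels = [min(range(k), key=lambda i: math.dist(p, centroids[i])) for p in points]
        # Update step: move each centroid to the mean of the points assigned to it.
        new_centroids = []
        for i in range(k):
            members = [p for p, label in zip(points, labels) if label == i]
            if members:
                new_centroids.append((sum(x for x, _ in members) / len(members),
                                      sum(y for _, y in members) / len(members)))
            else:
                new_centroids.append(centroids[i])  # leave an empty cluster's centroid in place
        if new_centroids == centroids:
            break  # centroids stopped moving, so the assignments are stable
        centroids = new_centroids
    return labels

# Hypothetical unlabeled data: two well-separated groups of points.
data = [(1.0, 1.0), (1.5, 2.0), (1.0, 1.5), (8.0, 8.0), (8.5, 9.0), (9.0, 8.0)]
labels = kmeans(data, k=2)
print(labels)  # the first three points share one index, the last three the other
```

The algorithm never sees a label: the grouping emerges purely from distances. Library implementations such as scikit-learn's `KMeans` add refinements like k-means++ initialization and multiple restarts.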

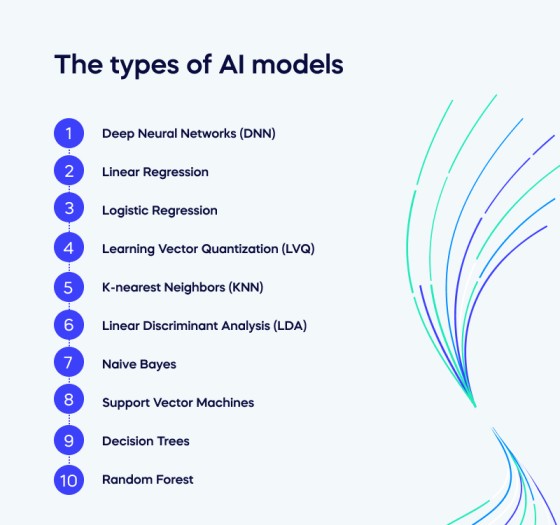
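The reinforcement learning entry describes an agent learning a policy from rewards. Here is a minimal tabular Q-learning sketch in Python, one common reinforcement learning algorithm among several, on a hypothetical five-cell corridor where the agent earns a reward only when it reaches the rightmost cell. The environment, reward scheme, and hyperparameters (learning rate `alpha`, discount `gamma`, exploration rate `epsilon`) are illustrative assumptions.

```python
import random

N_STATES = 5        # hypothetical corridor: cells 0..4, cell 4 is the goal
ACTIONS = (-1, 1)   # move left or move right

def step(state: int, action: int) -> tuple[int, float, bool]:
    """Toy environment: returns (next_state, reward, done). Walls clamp moves at both ends."""
    next_state = min(max(state + action, 0), N_STATES - 1)
    done = next_state == N_STATES - 1
    return next_state, (1.0 if done else 0.0), done

def q_learning(episodes: int = 500, alpha: float = 0.1, gamma: float = 0.9,
               epsilon: float = 0.1, seed: int = 0) -> dict[int, int]:
    """Learn action values Q(s, a) from experience and return the greedy policy."""
    rng = random.Random(seed)
    q = {(s, a): 0.0 for s in range(N_STATES) for a in ACTIONS}

    def greedy(state: int) -> int:
        # Break ties randomly so the untrained agent explores instead of always picking one action.
        best = max(q[(state, a)] for a in ACTIONS)
        return rng.choice([a for a in ACTIONS if q[(state, a)] == best])

    for _ in range(episodes):
        state, done = 0, False
        while not done:
            # Epsilon-greedy: occasionally explore a random action, otherwise exploit.
            action = rng.choice(ACTIONS) if rng.random() < epsilon else greedy(state)
            next_state, reward, done = step(state, action)
            # Move Q(s, a) toward the reward plus the discounted value of the best next action.
            target = reward if done else reward + gamma * max(q[(next_state, a)] for a in ACTIONS)
            q[(state, action)] += alpha * (target - q[(state, action)])
            state = next_state

    # The learned policy maps each non-terminal state to its highest-valued action.
    return {s: greedy(s) for s in range(N_STATES - 1)}

policy = q_learning()
print(policy)  # expected: {0: 1, 1: 1, 2: 1, 3: 1}
```

After training, the greedy policy should choose `1` (move right) in every non-terminal state, since that is the shortest route to the reward. Real-world settings such as robotics typically replace the lookup table with function approximators such as neural networks.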

The types of AI models

As we delve further into AI, it’s crucial to understand the diverse types of AI models that serve as the foundation for this technology. Each has its own characteristics, advantages, and applications, from simple linear regression models to complex deep neural networks.

These models are not just theoretical constructs; they are practical tools used in everyday applications, whether it’s the recommendation system of your favorite streaming service, the voice recognition in your virtual assistant, or the predictive analytics in healthcare diagnostics.

The list below covers some of the most common AI models.

- Deep Neural Networks (DNN): DNNs are a class of machine learning models loosely inspired by the neural networks of the human brain. They are composed of multiple layers of artificial neurons that process data and learn patterns used in decision-making, and they can learn to perform complex tasks with a high degree of accuracy.

- Linear Regression: Linear regression is a fundamental algorithm in statistics and machine learning. It predicts a continuous target variable based on one or more input features. The relationship between the input and the output is assumed to be linear.

- Logistic Regression: Despite its name, logistic regression is used for classification problems, not regression problems. It estimates the probability that an instance belongs to a particular class. If the estimated probability is greater than 50%, the model predicts that the instance belongs to that class; otherwise, it predicts that it does not.

- Learning Vector Quantization (LVQ): LVQ is a prototype-based artificial neural network that uses supervised learning to map input data to categorical output data. It’s particularly useful for classification problems when the input data is not linearly separable.

- K-nearest Neighbors (KNN): KNN is a simple, instance-based learning algorithm. It classifies a new instance by finding the k most similar training instances, usually measured with a distance function such as Euclidean or Manhattan distance, and assigning the class most common among them.

- Linear Discriminant Analysis (LDA): LDA is a technique used in statistics, pattern recognition, and machine learning to find a linear combination of features that characterizes or separates two or more classes of objects or events.

- Naive Bayes: Naive Bayes classifiers are a family of simple “probabilistic classifiers” based on applying Bayes’ theorem with strong independence assumptions between the features. They are highly scalable and suited for very high-dimensional datasets.

- Support Vector Machines (SVMs): SVMs are a powerful set of supervised learning methods for classification and regression. They’re well known for the kernel trick, which lets them model non-linear relationships.

- Decision Trees: Decision trees are models used for classification and regression. They learn a set of if-then-else decision rules from the data; for example, a regression tree can approximate a sine curve with a series of such rules.

- Random Forest: Random forests are an ensemble learning method for classification, regression, and other tasks. They construct many decision trees at training time and output the class chosen by most trees (for classification) or the mean prediction of the individual trees (for regression). They are generally more robust to overfitting than a single decision tree and often provide very accurate predictions.
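To make one of these entries concrete, here is a minimal pure-Python sketch of K-nearest Neighbors as described above: measure the distance from a new point to every labeled training point, keep the k closest, and take a majority vote. The function names and toy data are hypothetical, and `k=3` is an arbitrary illustrative choice; both Euclidean and Manhattan distance are included because the KNN entry mentions them.

```python
import math
from collections import Counter
from typing import Callable, Sequence

Point = Sequence[float]

def euclidean(a: Point, b: Point) -> float:
    return math.dist(a, b)

def manhattan(a: Point, b: Point) -> float:
    return sum(abs(x - y) for x, y in zip(a, b))

def knn_predict(train: list[tuple[Point, str]], query: Point, k: int = 3,
                distance: Callable[[Point, Point], float] = euclidean) -> str:
    """Classify `query` by majority vote among its k nearest labeled training points."""
    # KNN has no real training phase: it stores the data and searches it at prediction time.
    neighbors = sorted(train, key=lambda item: distance(item[0], query))[:k]
    votes = Counter(label for _, label in neighbors)
    return votes.most_common(1)[0][0]

# Hypothetical labeled data: two classes in two well-separated regions.
train = [((1.0, 1.0), "A"), ((1.5, 2.0), "A"), ((2.0, 1.0), "A"),
         ((6.0, 6.0), "B"), ((7.0, 7.5), "B"), ((6.5, 7.0), "B")]

print(knn_predict(train, (1.2, 1.4)))                      # A
print(knn_predict(train, (6.8, 6.9), distance=manhattan))  # B
```

Because every prediction scans the whole training set, this naive version computes n distances per query; libraries typically speed this up with spatial indexes such as k-d trees.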

AI modeling process

The true value of an AI model depends on the quality of its training and of the data it receives. For businesses to fully harness the potential of their AI systems, a thorough process of modeling, training, and inference is essential.

Modeling is the initial phase where an appropriate machine learning algorithm is selected based on the problem to be solved. This model acts as the framework for learning to recognize patterns from the data.

Training is the next crucial step, teaching the model to understand the data. It involves feeding the model data and letting it adjust its internal parameters to best fit that data. The objective is to minimize the difference between the model’s predictions and the actual values.

Finally, once the model has been sufficiently trained, it’s time for inference. This is when the model uses what it has learned to make predictions on new, unseen data, applying the patterns recognized during training to predict outcomes.
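The three phases can be illustrated with a deliberately small Python sketch, assuming a one-feature linear model: modeling is choosing the form `y = w * x + b`, training is gradient descent that adjusts `w` and `b` to minimize the mean squared error between predictions and actual values, and inference is applying the trained parameters to an input the model has not seen. The data, learning rate, and epoch count are illustrative assumptions.

```python
# Modeling: choose a model family, here a line y = w * x + b with parameters (w, b).
def predict(params: tuple[float, float], x: float) -> float:
    w, b = params
    return w * x + b

# Training: adjust (w, b) by gradient descent to minimize mean squared error (MSE).
def train(xs: list[float], ys: list[float],
          lr: float = 0.02, epochs: int = 5000) -> tuple[float, float]:
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(epochs):
        errors = [predict((w, b), x) - y for x, y in zip(xs, ys)]
        # Partial derivatives of MSE = (1/n) * sum(error^2) with respect to w and b.
        grad_w = (2 / n) * sum(e * x for e, x in zip(errors, xs))
        grad_b = (2 / n) * sum(errors)
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b

# Hypothetical training data generated from y = 2x + 1.
xs = [1.0, 2.0, 3.0, 4.0, 5.0]
ys = [3.0, 5.0, 7.0, 9.0, 11.0]
params = train(xs, ys)

# Inference: use the trained parameters on an input not seen during training.
print(round(predict(params, 6.0), 3))  # 13.0
```

In practice, training would also hold out validation data to check that the model generalizes rather than merely memorizing the training set.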

Therefore, for businesses aiming to leverage AI systems to their maximum potential, extensive modeling, training, and inference aren’t just recommended steps but indispensable components of a successful AI strategy.

The path ahead: The future of AI

Over the next three to five years, AI is expected to revolutionize business operations even further. Statista reports that more than half of organizations were prioritizing artificial intelligence/machine learning initiatives in 2021: “Nearly half of respondents claimed it was of high priority, and nearly a third claimed it was the top priority among IT projects in their organizations.”

However, it’s important to note that while AI holds immense promise, its adoption in enterprises has yet to become mainstream. The journey to realizing AI’s full potential involves overcoming challenges mainly related to data privacy, model transparency, and technology infrastructure.

The main challenges in implementing AI solutions include ensuring data privacy, since confidential information is often used to train AI models; maintaining model transparency, so stakeholders understand how the AI makes decisions; and establishing the robust technology infrastructure needed to support complex AI computations and large data volumes.

On the opportunity side, AI’s biggest potential impact could be entirely new business models built on AI-driven insights. These could produce innovative products and services, opening up new markets and revenue streams that deliver unprecedented value to customers.

Overall, the consensus is that AI, though still at a nascent stage, is poised to put business capabilities on a new trajectory as it continues to transform how businesses operate, innovate, and deliver value.